SLV Adds Support for the SHA-256 Optimization Patch That Accelerates Solana Validator PoH (Known as the kagren Patch) — 10–20% PoH Speed Check Improvement, Delivering Epics DAO Validator Operational Insights to All SLV Users via AI Agents

ELSOUL LABO B.V. (Headquarters: Amsterdam, Netherlands; CEO: Fumitake Kawasaki) and Validators DAO are pleased to announce that SLV, their jointly developed and operated open-source Solana development tool, now supports an optimization patch (known as the kagren patch) that accelerates the SHA-256 computation used by Solana validator PoH (Proof of History).

Following real-world validation on the Epics DAO validator, the patch has been integrated into SLV in a form that applies to both Solana validators and Solana RPC nodes, and can be applied by any SLV user through nothing more than a conversation with an AI agent.

On AMD Zen3 or newer CPUs equipped with SHA-NI instructions, a 10–20% improvement in the PoH speed check measured at startup is expected. This performance gain translates directly into additional processing headroom during leader slots on Solana validators.

SLV Website: https://slv.dev/en

SLV GitHub: https://github.com/validatorsDAO/slv

What Is the kagren Patch? — Precise Optimization of the Hottest Path in Solana Validators

Solana's consensus is built on a continuous SHA-256 hash chain known as Proof of History (PoH). The process of taking the previous hash (32 bytes) as input to generate the next hash is repeated hundreds of thousands of times within a single slot (approximately 400 ms). Among all code paths in a Solana validator, this PoH SHA-256 computation is the most frequently executed and is the dominant consumer of CPU time.
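The hash chain itself is simple to state. The loop below is a minimal Python sketch using the standard library's hashlib; it is not Agave's PoH service. The per-slot count assumes the SDK default genesis values of 12,500 hashes per tick and 64 ticks per slot. That gives 800,000 strictly sequential SHA-256 calls per roughly 400 ms slot. A live cluster's parameters may differ, and the all-zero seed is purely illustrative.

```python
import hashlib

# Assumed SDK default genesis values; a live cluster may use different ones.
HASHES_PER_TICK = 12_500
TICKS_PER_SLOT = 64
HASHES_PER_SLOT = HASHES_PER_TICK * TICKS_PER_SLOT  # 800,000


def poh_step(prev: bytes) -> bytes:
    """One PoH step: the next hash is SHA-256 of the previous 32-byte hash."""
    return hashlib.sha256(prev).digest()


def poh_chain(seed: bytes, num_hashes: int) -> bytes:
    """Apply num_hashes sequential steps. Each step needs the previous
    output, so the work cannot be spread across cores."""
    h = seed
    for _ in range(num_hashes):
        h = poh_step(h)
    return h


if __name__ == "__main__":
    seed = bytes(32)  # illustrative all-zero starting hash
    tick_hash = poh_chain(seed, HASHES_PER_TICK)
    print(HASHES_PER_SLOT, tick_hash.hex())
```

Every input to `poh_step` after the seed is exactly 32 bytes. This is the property the patch exploits.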

The so-called "kagren patch" is a targeted effort to optimize this hottest path. Its original author, kagren, forked the solana-sha256-hasher crate from solana-sdk and provides a SHA-NI implementation specialized for PoH's input condition: 32 bytes in a single block.

This patch is released under the Creative Commons CC0 1.0 Universal License, allowing anyone to freely use, modify, and redistribute it. We extend our sincere respect to kagren for this contribution, released openly to the Solana ecosystem.

solana-sha256-hasher-optimized (kagren): https://github.com/kagren/solana-sha256-hasher-optimized

SHA-NI Instructions and Deterministic Optimization for 32-Byte, Single-Block Input

SHA-256 is an algorithm that processes data in 64-byte (512-bit) blocks. When hashing a 32-byte input, the remaining 32 bytes of the block are filled with specification-defined padding: a single 0x80 byte, 23 zero bytes, and an 8-byte big-endian field holding the input length in bits (256).
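The padding rule can be checked directly. The function below is a small Python sketch of the standard SHA-256 padding from FIPS 180-4 (the function name is our own). For any 32-byte message it yields exactly one 64-byte block, and the second half of that block is always the same.

```python
import struct


def sha256_pad(message: bytes) -> bytes:
    """Standard SHA-256 padding: append 0x80, then zero bytes until the
    length is 56 mod 64, then the message length in bits as a 64-bit
    big-endian integer."""
    bit_len = len(message) * 8
    padded = message + b"\x80"
    padded += b"\x00" * ((56 - len(padded)) % 64)
    return padded + struct.pack(">Q", bit_len)


a = sha256_pad(bytes(32))
b = sha256_pad(b"\xff" * 32)
# Same length, same 32-byte tail, regardless of the input contents.
print(len(a), a[32:] == b[32:])
```

A 56-byte input, by contrast, no longer fits and spills into a second block.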

The key observation is this: when hashing is always performed on 32 bytes in a single block, as in PoH, this padding is entirely deterministic. The kagren patch unfolds these deterministic portions ahead of time along the SHA-NI computation path, stripping out the branches, loops, and loads that were present in the general-purpose implementation. As a result, for PoH's specific input condition of 32 bytes and a single block, it extracts the maximum throughput from SHA-NI.

For inputs other than 32 bytes, or inputs that span multiple blocks, the original general-purpose implementation continues to be used. On-chain SHA-256 computation (hash calls made by programs running in the SBF virtual machine) is also left entirely unchanged. The optimization applies only to workloads that repeatedly compute single-block, 32-byte hashes, as PoH does, and has no effect on any other path.
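The idea of unfolding the fixed padding, together with the length-based fallback, can be illustrated in plain Python. This is not kagren's code and it does not use SHA-NI. It only shows the structure under that simplification:

- The eight padding words of the message schedule are a module-level constant instead of being built on every call.
- A small dispatcher sends every other input length to the general implementation (hashlib here).
- The round constants are derived from the primes exactly as FIPS 180-4 defines them, which keeps the sketch short.

Pure Python is far slower than hashlib. Only the shape of the specialization matters here.

```python
import hashlib
import math
import struct

MASK = 0xFFFFFFFF


def _primes(n):
    found, c = [], 2
    while len(found) < n:
        if all(c % p for p in found if p * p <= c):
            found.append(c)
        c += 1
    return found


def _icbrt(n):
    """Integer cube root: the largest r with r**3 <= n."""
    lo, hi = 0, 1 << ((n.bit_length() + 2) // 3 + 1)
    while lo < hi:
        mid = (lo + hi + 1) // 2
        if mid ** 3 <= n:
            lo = mid
        else:
            hi = mid - 1
    return lo


# FIPS 180-4 constants: first 32 fractional bits of the cube roots (K)
# and square roots (H0) of the first primes.
_P = _primes(64)
K = [_icbrt(p << 96) & MASK for p in _P]
H0 = [math.isqrt(p << 64) & MASK for p in _P[:8]]

# Message words 8..15 for a 32-byte input: 0x80 marker, zeros, bit length 256.
# They are identical for every PoH step, so they are never recomputed.
PAD_WORDS = (0x80000000, 0, 0, 0, 0, 0, 0, 256)


def _rotr(x, n):
    return ((x >> n) | (x << (32 - n))) & MASK


def sha256_32(data: bytes) -> bytes:
    """Single-block SHA-256 specialized for exactly 32 bytes of input."""
    w = list(struct.unpack(">8I", data)) + list(PAD_WORDS)
    for i in range(16, 64):
        s0 = _rotr(w[i - 15], 7) ^ _rotr(w[i - 15], 18) ^ (w[i - 15] >> 3)
        s1 = _rotr(w[i - 2], 17) ^ _rotr(w[i - 2], 19) ^ (w[i - 2] >> 10)
        w.append((w[i - 16] + s0 + w[i - 7] + s1) & MASK)
    a, b, c, d, e, f, g, h = H0
    for i in range(64):
        t1 = (h + (_rotr(e, 6) ^ _rotr(e, 11) ^ _rotr(e, 25))
              + ((e & f) ^ (~e & g)) + K[i] + w[i]) & MASK
        t2 = ((_rotr(a, 2) ^ _rotr(a, 13) ^ _rotr(a, 22))
              + ((a & b) ^ (a & c) ^ (b & c))) & MASK
        h, g, f, e = g, f, e, (d + t1) & MASK
        d, c, b, a = c, b, a, (t1 + t2) & MASK
    return struct.pack(">8I", *[(x + y) & MASK for x, y in
                                zip(H0, (a, b, c, d, e, f, g, h))])


def hash_bytes(data: bytes) -> bytes:
    """Dispatch: fast path only for the PoH shape, fallback for the rest."""
    if len(data) == 32:
        return sha256_32(data)
    return hashlib.sha256(data).digest()
```

Because the fast path is chosen purely by input length, every other caller sees the general implementation unchanged.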

10–20% Improvement in PoH Speed Check — Direct Impact on Leader Slot Processing Headroom

On AMD Zen3 or newer CPUs, the PoH speed check value measured at Solana validator startup has been reported by the original author to improve by 10–20% after applying this patch. We observed a similar level of improvement in real-world validation on the Epics DAO validator.

The significance of this improvement goes beyond a benchmark number. PoH computational headroom translates directly into the processing headroom a Solana validator has during its leader slots. Within the limited time of a leader slot, the validator must ingest transactions, accumulate Compute Units, and produce blocks. Reducing the CPU time consumed by PoH computation leaves more resources for all of this work.
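A back-of-envelope model shows how a faster hash rate turns into slack in the slot's time budget. It assumes the SDK default genesis values (12,500 hashes per tick, 64 ticks per slot, about 400 ms per slot). It also assumes the startup speed check compares a measured hash rate against the required target rate. The 5 million hashes per second baseline and the 15% speed-up are hypothetical inputs, not measurements from the Epics DAO validator.

```python
# Assumed SDK default genesis values; a live cluster may differ.
HASHES_PER_TICK = 12_500
TICKS_PER_SLOT = 64
SLOT_SECONDS = 0.4


def poh_budget(hashes_per_second: float) -> dict:
    """Time one core needs to produce a slot's PoH hashes at a given rate."""
    hashes_per_slot = HASHES_PER_TICK * TICKS_PER_SLOT
    busy = hashes_per_slot / hashes_per_second
    target_rate = hashes_per_slot / SLOT_SECONDS  # rate needed to keep pace
    return {
        "busy_seconds": busy,
        "busy_fraction": busy / SLOT_SECONDS,
        "margin_over_target": hashes_per_second / target_rate,
    }


baseline = poh_budget(5_000_000)        # hypothetical measured rate
patched = poh_budget(5_000_000 * 1.15)  # hypothetical 15% faster hashing
print(f"{baseline['busy_fraction']:.1%} -> {patched['busy_fraction']:.1%}")
```

Under these assumptions, the share of each slot spent hashing drops by the same proportion as the speed-up.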

This is a quiet but reliable improvement that supports a validator's key performance indicators: lower vote latency, lower skip rate, and more Compute Units per block.

No Impact on Consensus — Fully Compatible Fallback Design

SHA-256 computations under the kagren patch produce results identical to those of the standard implementation. The execution path branches based on the input condition: 32-byte, single-block inputs take the optimized route, while everything else falls back to the standard implementation. On-chain SHA-256 computation remains entirely unchanged.

There is no structural risk of consensus-level issues — such as a validator landing on a fork due to hash results disagreeing with other validators. Before deploying patched binaries, SLV runs a verification step to confirm result parity with the standard implementation, and only then proceeds with the switch-over.
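The article does not describe how SLV's parity check works internally. The harness below is a hypothetical sketch of such a check, and `check_parity` and `reference_sha256` are our own illustrative names. It runs a PoH-style chain through both a candidate hasher and a reference, stopping at the first divergence. It also spot-checks non-32-byte inputs, which should take the fallback path and still agree.

```python
import hashlib
from typing import Callable

Hasher = Callable[[bytes], bytes]


def reference_sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def check_parity(candidate: Hasher, steps: int = 10_000,
                 seed: bytes = bytes(32)) -> bool:
    """Hypothetical pre-deploy check: the candidate must match the
    reference on a chained 32-byte run and on other input lengths."""
    a = b = seed
    for _ in range(steps):
        a, b = candidate(a), reference_sha256(b)
        if a != b:
            return False  # diverged: do not deploy
    msg = bytes(range(256)) * 4
    for n in (0, 1, 31, 33, 55, 56, 64, 1000):
        if candidate(msg[:n]) != reference_sha256(msg[:n]):
            return False
    return True


broken = lambda d: reference_sha256(d)[::-1]  # deliberately wrong hasher
print(check_parity(reference_sha256), check_parity(broken))
```

Chaining the inputs means any single wrong output changes every later hash, so one mismatch anywhere in the run is caught.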

Target CPUs and Prerequisites

This patch only delivers its benefit on CPUs equipped with the SHA-NI instruction set. The reported 10–20% improvement applies to AMD Zen3 or later architectures: EPYC 7003 / 9004 / 9005 series, Ryzen 5000 series and later, Threadripper 5000 / 7000 series, and similar processors.

Most of the configurations used in Epics DAO validator operations and across the ERPC platform meet this condition, so the majority of ERPC's Solana RPC nodes and SLV Metal series servers can benefit from this patch. On older-generation CPUs without SHA-NI instructions, SLV skips the patch application and continues operation on the standard implementation.
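A node can be gated on SHA-NI availability by checking the CPU flags the Linux kernel exposes. The kernel reports the x86 SHA extensions as the `sha_ni` flag in /proc/cpuinfo. The sketch below is hypothetical and is not SLV's actual detection code. Note that SHA-NI presence alone does not guarantee the reported gain, which the source attributes to Zen3 and newer.

```python
from pathlib import Path


def cpu_flags(cpuinfo_text: str) -> set[str]:
    """Return the flags of the first CPU listed in /proc/cpuinfo-style text."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            return set(value.split())
    return set()


def has_sha_ni(cpuinfo_text: str) -> bool:
    # Linux reports the x86 SHA extensions as "sha_ni".
    return "sha_ni" in cpu_flags(cpuinfo_text)


def should_apply_patch(cpuinfo_path: str = "/proc/cpuinfo") -> bool:
    """Hypothetical gate: patch only when SHA-NI is present."""
    try:
        text = Path(cpuinfo_path).read_text()
    except OSError:
        return False  # cannot tell: stay on the standard implementation
    return has_sha_ni(text)
```

Failing closed when the flags cannot be read mirrors the behavior described above: without SHA-NI, the node simply keeps the standard implementation.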

Real-World Validation on the Epics DAO Validator — Another Building Block Behind Our World #3 Ranking

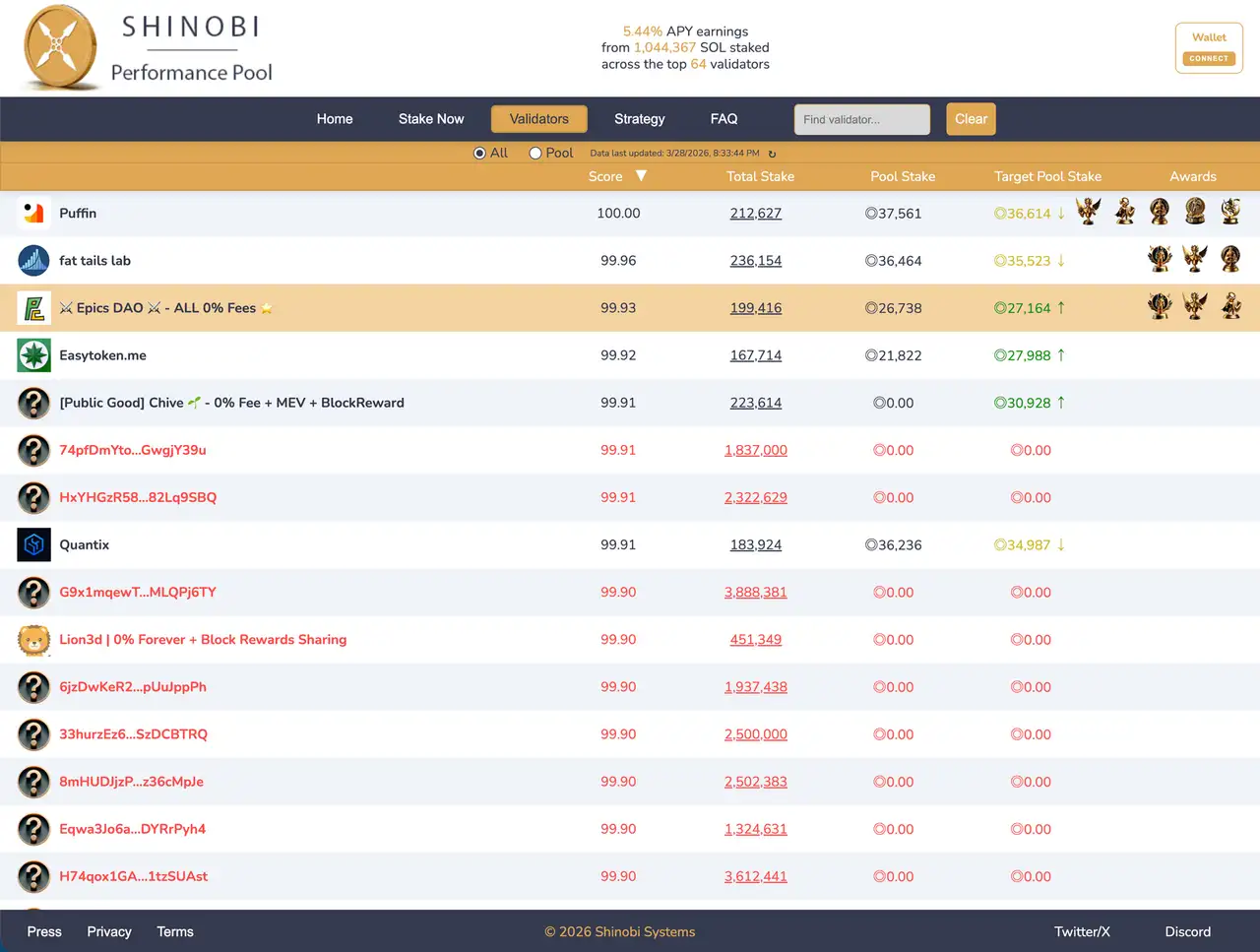

The Epics DAO validator, which we operate as the source for ERPC's SWQoS endpoints and Epic Shreds feed, has reached world rank #3 overall (score 99.93) among all Solana validators in the Shinobi Performance Pool. This result reflects the accumulation of multiple improvements: hardware selection, kernel parameter optimization, network stack tuning, IRQ affinity adjustment, and DoubleZero integration.

The kagren patch integration is another addition to this accumulation. After real-world validation on the Epics DAO validator confirmed both its effectiveness and stability in production, we incorporated it into SLV as a built-in skill. Optimization techniques proven by world-class validator operations are now available in a form that any SLV user can reproduce.

Validators DAO exists to raise the overall processing quality and fault tolerance of the Solana network. Performance improvements on individual validators translate directly into higher processing throughput across the entire Solana chain. An optimization that kagren released under CC0, validated on the Epics DAO validator, and delivered to validator operators worldwide through SLV — this cycle of giving knowledge back is at the core of our reason for being.

Validator and RPC Dual Support in SLV — Automatic Client Detection and Remote Build & Deploy

With this release, both Solana validators and Solana RPC nodes are covered as targets for patch application in SLV.

SLV automatically detects the client type running on the target node (Agave, Jito-Agave) and clones the appropriate Solana source tree into a remote build environment. The kagren patch repository is also fetched automatically, and the entire process — applying the patch to the PoH hashing logic, rebuilding with target-CPU optimization flags, backing up the existing binary, and deploying the patched binary — runs end-to-end under SLV's control.
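The same sequence can be written down as a dry-run plan. Everything here is a hypothetical sketch rather than SLV's implementation:

- The repository mapping, install path, tag, and deploy commands are illustrative assumptions.
- The patch-integration step is left as a placeholder because the article does not say how SLV applies the patch.
- `-C target-cpu=native` is a real rustc flag, but it only matches the target CPU if the build host has the same CPU. Otherwise an explicit target such as `znver3` would be needed.

```python
from dataclasses import dataclass

# Assumed upstream repositories per client type; SLV's mapping may differ.
SOURCE_REPOS = {
    "agave": "https://github.com/anza-xyz/agave.git",
    "jito-agave": "https://github.com/jito-foundation/jito-solana.git",
}
PATCH_REPO = "https://github.com/kagren/solana-sha256-hasher-optimized.git"


@dataclass
class Step:
    name: str
    command: str


def build_plan(client: str, tag: str, install_dir: str) -> list[Step]:
    """Dry-run plan in the order described above (hypothetical commands)."""
    repo = SOURCE_REPOS[client]  # unknown client -> KeyError
    binary = f"{install_dir}/agave-validator"
    return [
        Step("clone source",
             f"git clone --depth 1 --recurse-submodules --branch {tag} {repo} src"),
        Step("fetch patch", f"git clone --depth 1 {PATCH_REPO} patch"),
        Step("apply patch", "# integrate patch/ into src/ (mechanism defined by SLV)"),
        Step("build", 'cd src && RUSTFLAGS="-C target-cpu=native" '
                      "cargo build --release --bin agave-validator"),
        Step("backup", f"cp -p {binary} {binary}.bak"),
        Step("deploy",
             f"install -m 0755 src/target/release/agave-validator {binary}"),
    ]


for step in build_plan("agave", "v2.2.0", "/opt/solana/bin"):  # tag is illustrative
    print(f"{step.name:>12}: {step.command}")
```

Keeping the plan as data makes it easy to review before anything runs, which matters when the final step replaces a production binary.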

The Solana source version can be specified explicitly or resolved automatically based on the version information managed by SLV. Bulk application across multiple nodes is also supported, covering the use case of rolling out the patch progressively across an operating fleet.
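For progressive rollout, one common pattern is to patch a small canary group first and then proceed in fixed-size waves. The helper below is a generic, hypothetical sketch of that grouping. The article does not say how SLV batches nodes.

```python
def rollout_waves(hosts: list[str], canary: int = 1,
                  wave_size: int = 5) -> list[list[str]]:
    """Split a fleet into a canary wave followed by fixed-size waves."""
    if canary < 0 or wave_size < 1:
        raise ValueError("canary must be >= 0 and wave_size >= 1")
    waves = [hosts[:canary]] if canary else []
    rest = hosts[canary:]
    waves += [rest[i:i + wave_size] for i in range(0, len(rest), wave_size)]
    return [w for w in waves if w]  # drop empty waves (e.g. empty fleet)


fleet = [f"rpc-{i}" for i in range(8)]  # hypothetical host names
for n, wave in enumerate(rollout_waves(fleet, canary=1, wave_size=3)):
    print(n, wave)
```

Observing the canary through a few leader slots before continuing limits the blast radius if something unexpected appears.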

Note that after the patched binary has been deployed, restarting the Solana validator or RPC process is performed separately by the operator. SLV handles everything up to the binary swap; the timing of the restart is left to each operator's policy. When the SLV AI agent is in use, the restart itself can also be delegated to the agent.
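Separating the binary swap from the restart works naturally on Linux when the new file is renamed into place. The running process keeps executing its already-loaded old file, and writing directly over a running executable would typically fail with "Text file busy". The function below is a hypothetical sketch of a backup-then-atomic-replace swap, not SLV's code.

```python
import os
import shutil
import tempfile
from pathlib import Path


def swap_binary(new_binary: Path, target: Path) -> Path:
    """Back up the current binary, then atomically replace it.
    Returns the backup path. A running process keeps its old image
    until the operator restarts it."""
    backup = target.with_name(target.name + ".bak")
    shutil.copy2(target, backup)                   # rollback copy
    fd, tmp = tempfile.mkstemp(dir=target.parent)  # same filesystem as target
    os.close(fd)
    try:
        shutil.copy2(new_binary, tmp)
        os.chmod(tmp, 0o755)
        os.replace(tmp, target)                    # atomic rename on POSIX
    except BaseException:
        os.unlink(tmp)                             # no half-written leftovers
        raise
    return backup
```

Rolling back is the same operation in reverse: rename the `.bak` copy into place and restart.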

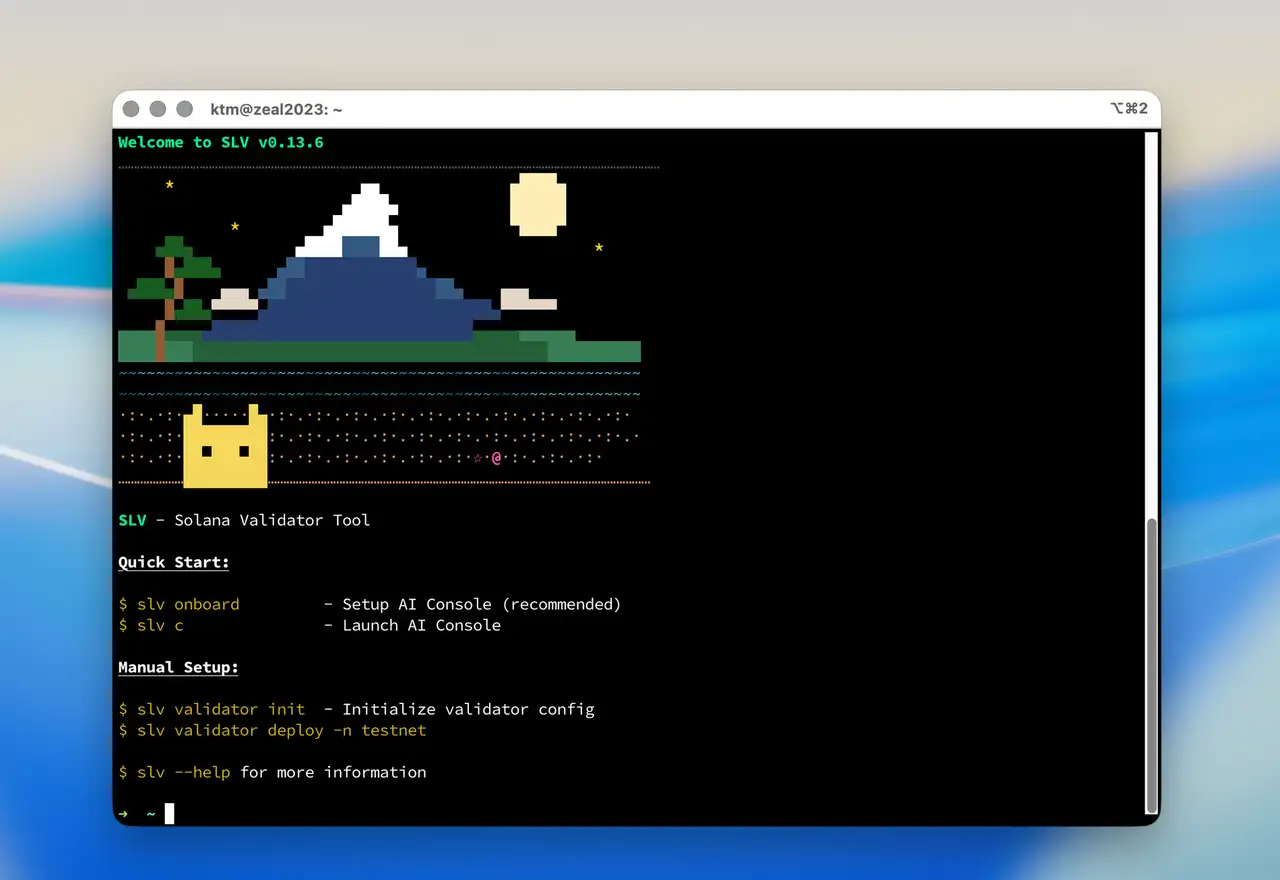

Combined with the AI Agent — Entirely Through Natural Language

Like the rest of SLV's functionality, kagren patch application is exposed through MCP (Model Context Protocol). By launching the AI Console and simply telling the AI agent something like "Apply the SHA-256 optimization patch to this validator", the entire flow — node identification, build, and deploy — is carried out by the agent, which selects and executes the appropriate steps.
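Under MCP, an agent invokes a server-side tool by sending a JSON-RPC 2.0 request with the method `tools/call`, a tool `name`, and an `arguments` object. The agent composes this from the operator's natural-language request. The sketch below only builds such a message. The tool name and argument keys are hypothetical, since SLV's MCP tool schema is not given in this article.

```python
import json


def mcp_tool_call(request_id: int, tool: str, arguments: dict) -> str:
    """Serialize an MCP 'tools/call' request as JSON-RPC 2.0."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    })


# Hypothetical tool name and arguments, for illustration only.
msg = mcp_tool_call(1, "apply_sha256_patch",
                    {"target": "my-validator", "client": "agave"})
print(msg)
```

Because the CLI and the agent share the same MCP foundation, a scripted flow and an agent-driven one end up issuing the same underlying operation.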

Direct CLI execution is also supported, so the same operation can be incorporated into scripted automation flows. Whether the AI agent is used or not, the same behavior is reproduced on top of the same MCP foundation.

Until now, applying a custom patch to a Solana validator has required the operator to handle the entire sequence themselves: cloning the source code, setting up a build environment, resolving dependencies, integrating the patch, adjusting optimization flags, and swapping binaries. SLV abstracts this whole process into something the AI agent can perform on the operator's behalf.

This continues the policy SLV put forward with its DoubleZero support: a technology that an operator wants to adopt for performance improvement should never be held back by operational complexity.

Local Mode Support — From a Single Machine to an Entire Fleet

In addition to remote management, SLV supports a local mode in which SLV is run directly on a node reached via ssh. Kagren patch application works in local mode as well, so applying the patch directly to a single running node, or rolling it out across an entire fleet under an Ansible-based remote management setup, can both be completed within the same SLV environment.

For users migrating from solv, applying the kagren patch in local mode is an easy entry point. Start with one machine and scale to remote management as needed — SLV's overall design philosophy carries through consistently to the way performance tuning is introduced as well.

Contribution to the Solana Network as a Whole

Solana is a distributed computing network. Its performance is determined by the sum of the performance of every individual validator distributed around the world.

The PoH headroom each validator gains (a 10–20% faster PoH speed check) accumulates, at the level of the whole network, as additional processing headroom during leader slots, more accurate and timely voting, and more stable block production. Delivering an optimization that kagren released under CC0 to more validators through an operational platform like SLV is a contribution to the performance and fault tolerance of the Solana network as a whole.

SLV will continue to provide improvements that matter for real-world operations in a form that can be applied through nothing more than a conversation with an AI agent. By structurally lowering the cognitive load of validator operations and reducing the barriers to the work required for performance improvement, we will keep building an environment in which more operators can run their validators at a higher quality level.

Delivered as Open Source — Continuing to Give Knowledge Back

SLV itself continues to be provided as open source. All of its functionality, including this kagren patch integration, is freely available from the SLV GitHub repository.

The knowledge gained from ERPC's real-world operations and R&D is published as open source through SLV's skills and tools. Optimization techniques, tuning parameters, and operational know-how accumulated along the road to reaching world rank #3 with the Epics DAO validator — all of this is concentrated in SLV's skills for its AI agents, in a form that any validator operator around the world can reproduce at the same quality level.

SLV Website: https://slv.dev/en

SLV GitHub: https://github.com/validatorsDAO/slv

Try It Right Now with SLV AI Tokens

Kagren patch application is also available as part of SLV's AI agent functionality. By using SLV AI tokens, the entire application work can be completed through natural language dialogue with the AI agent.

As a launch promotion, we are distributing 100,000 tokens free of charge with a €5 authorization, more than enough to experience applying the kagren patch through a conversation with the AI agent. SLV AI also supports connections using ChatGPT and Claude API tokens, so users can run it with their own API keys.

ERPC SLV AI Plans: https://erpc.global/en/price/

Combined with the ERPC Platform

All Solana validators and Solana RPC nodes running on the ERPC platform are built on AMD Zen4 or newer CPUs — configurations that benefit from the kagren patch. By deploying an environment built with SLV onto the ERPC platform, users get all of the following from day one: high-speed snapshot downloads within the platform, zero-distance communication with Solana validators, Solana-specific tuned configurations, and PoH acceleration via the kagren patch.

ERPC integrates Solana RPC, Solana Geyser gRPC, Solana Shredstream (Epic Shreds), bare-metal servers, high-performance VPS, and ERPC Global Storage into a single platform, with all services connected over internal network paths in a zero-distance design. DoubleZero's dedicated fiber network is also integrated across every region, with a particularly notable P99 latency reduction of around 200 ms in the Asia region (Tokyo and Singapore).

ERPC Website: https://erpc.global/en

Five Consecutive Years of WBSO Approval — AS200261 Solana-Dedicated Datacenter

ELSOUL LABO has received approval for five consecutive years since 2022 under WBSO, the research and development support program of the Dutch government. The results of continuous R&D on Solana RPC infrastructure, validator placement and operational orchestration, and the construction of AI-agent-driven Solana operations environments are implemented directly in SLV's toolset and AI agents.

As the culmination of this R&D, we are building a Solana-dedicated datacenter under our own ASN (AS200261), assigned by RIPE NCC. With hardware standardized on the latest generation (AMD EPYC 5th Gen, AMD Threadripper PRO 5th Gen (9975WX and above), and NVMe Gen 5) combined with optimal network path design enabled by our own ASN, this facility will deliver top-tier quality that surpasses existing premium datacenters. The opening is scheduled for this month, and it will support further acceleration of the environments that SLV AI agents build.

Acknowledgment to kagren

This integration into SLV would not have been possible without the work that kagren made public in the solana-sha256-hasher-optimized repository. We once again extend our deep respect and gratitude for this effort, released under CC0 as a contribution to the Solana ecosystem.

An improvement released as open source, delivered to validator operators around the world through another open source tool (SLV) — a cycle of shared knowledge like this is what makes the Solana ecosystem as a whole stronger. On our side, we will likewise continue giving back, through SLV, the knowledge we gain from operating the ERPC platform and the Epics DAO validator.

solana-sha256-hasher-optimized (kagren): https://github.com/kagren/solana-sha256-hasher-optimized

Contact

For inquiries about SLV and ERPC, please create a support ticket on the Validators DAO official Discord.

Validators DAO Official Discord: https://discord.gg/C7ZQSrCkYR

Links

- SLV Website: https://slv.dev/en

- SLV GitHub: https://github.com/validatorsDAO/slv

- ERPC Website: https://erpc.global/en

- ERPC SLV AI Plans: https://erpc.global/en/price/

- solana-sha256-hasher-optimized (kagren): https://github.com/kagren/solana-sha256-hasher-optimized

- Epics DAO Website: https://epics.dev/en

- Validators DAO Official Discord: https://discord.gg/C7ZQSrCkYR